- NextCareerStep

The "ChatGPT for Doctors" That Only Reads Medical Journals

FREE DOWNLOAD: AI Engineer Resume Template, 5 Resume Mistakes Every Working Professional Makes

OpenEvidence: The "ChatGPT for Doctors" That Only Reads Medical Journals

Explore how 400 business leaders are implementing voice AI across industries

FREE DOWNLOAD: AI Engineer Resume Template, 5 Resume Mistakes Every Working Professional Makes

Gemini 3 Pro and Nano Banana 2 Could Launch Together

Baidu's Open-Source AI Model Beats GPT-5 and Gemini

Scientists Create System That Decodes Your Thoughts

Google's "Ask" Button: Talk to Your Photos and YouTube Videos

Google DeepMind's SIMA 2: An AI Gaming Buddy That Learns and Reasons

LinkedIn Gets Smarter: AI-Powered Search Finds People by Description, Not Just Names

OpenEvidence: The "ChatGPT for Doctors" That Only Reads Medical Journals

Imagine an AI assistant that learns only from peer-reviewed medical research and is designed not to hallucinate dangerous health advice. That's OpenEvidence, a startup that has raised $410 million in the last few months and is now valued at $6 billion.

Regular AI models pull information from everywhere including Reddit forums and questionable websites. OpenEvidence only uses verified sources like the Lancet, New England Journal of Medicine, JAMA, and the National Comprehensive Cancer Network. When it gives you an answer, it shows you exactly which papers it's citing so doctors can verify the information.

Doctors are using it to quickly check unfamiliar medications, review updated cardiac protocols, or get information about rare conditions. Instead of spending 15 minutes searching through medical databases, they get answers in seconds with citations to back it up.

The tool is only for verified healthcare professionals (you need to prove you're a doctor to access it), and it's currently free because it's ad-supported. The company wants to avoid "disintermediation"—they believe diagnosis and treatment should stay between doctors and patients, not be replaced by AI.

Explore how 400 business leaders are implementing voice AI across industries

From Hype to Production: Voice AI in 2025

Voice AI has crossed into production. Deepgram’s 2025 State of Voice AI Report with Opus Research quantifies how 400 senior leaders - many at $100M+ enterprises - are budgeting, shipping, and measuring results.

Adoption is near-universal (97%), budgets are rising (84%), yet only 21% are very satisfied with legacy agents. And that gap is the opportunity: using human-like agents that handle real tasks, reduce wait times, and lift CSAT.

Get benchmarks to compare your roadmap, the first use cases breaking through (customer service, order capture, task automation), and the capabilities that separate leaders from laggards - latency, accuracy, tooling, and integration. Use the findings to prioritize quick wins now and build a scalable plan for 2026.

FREE DOWNLOAD: AI Engineer Resume Template

I have created a simple resume template for AI engineers with 2-3 years of experience.

Click the button below to get the editable DOC file:

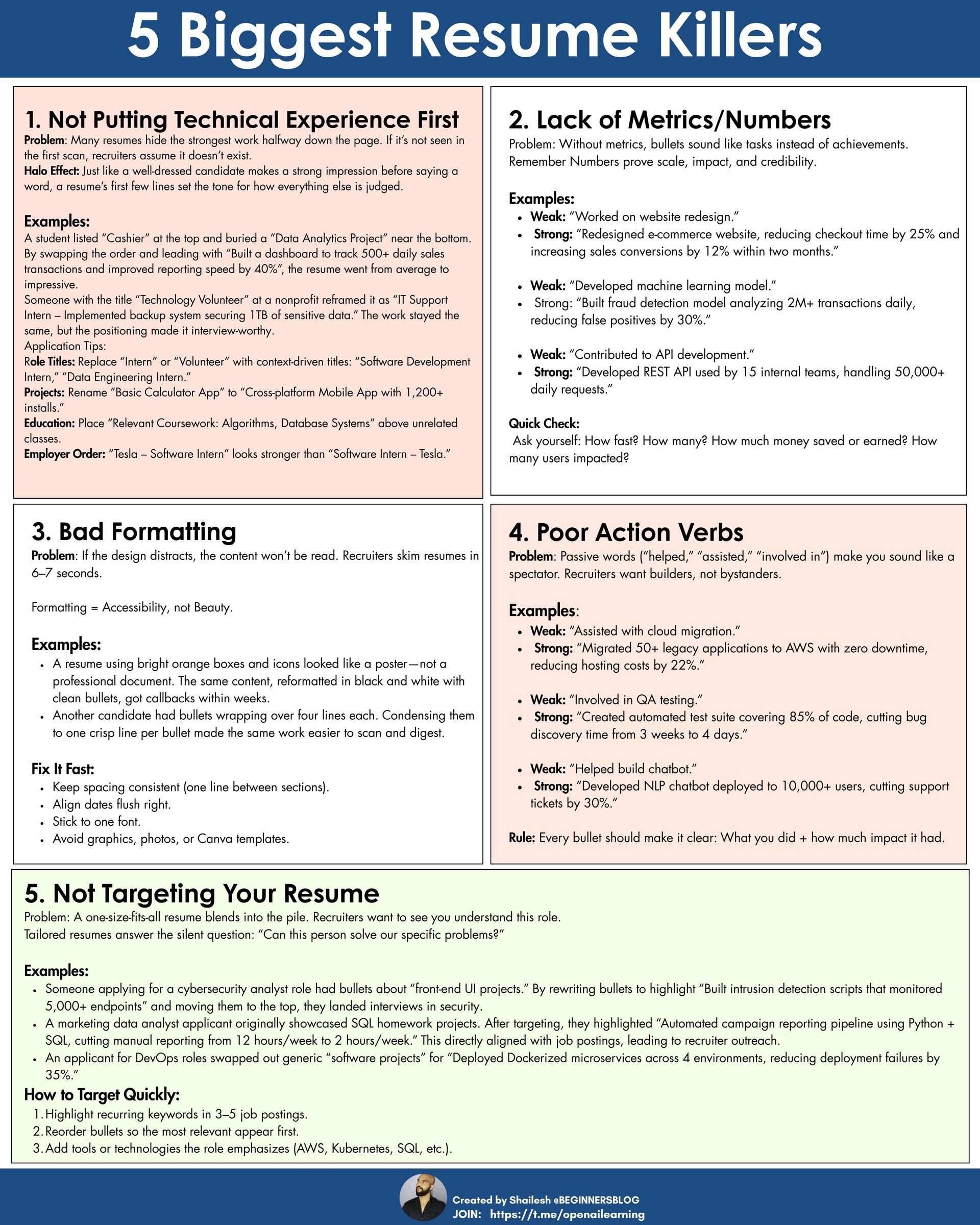

5 Resume Mistakes Every Working Professional Makes

Gemini 3 Pro and Nano Banana 2 Could Launch Together

Google might be gearing up for a double AI launch that could shake things up. Code discovered in the Gemini iPhone app suggests that Gemini 3 Pro and the upgraded Nano Banana 2 image generator are coming together soon—possibly this week.

Leaked demos appear to show Gemini 3 cloning entire operating systems like iOS and macOS. That's where Nano Banana 2 comes in. The upgraded image model can create incredibly realistic app interfaces, complete with working buttons, menus, and polished visuals. One user even generated a Windows operating system clone that looked remarkably authentic.

The timing makes sense too. OpenAI just surprised everyone with GPT-5.1, so Google's likely responding with its own big reveal. If the leaked demos are accurate, this could enable "vibe-coding" where anyone can create functional apps just by describing what they want…no technical skills required. The AI would handle everything from design to functionality.

Google's playing catch-up but bringing serious firepower. If Gemini 3 Pro launches with Nano Banana 2, we might finally see AI that can turn your app ideas into reality without you writing a single line of code.

Baidu's Open-Source AI Model Beats GPT-5 and Gemini

China's Baidu just dropped a bombshell in the AI world with ERNIE-4.5-VL-28B-A3B-Thinking (yes, that's actually the name). The company claims this open-source model outperforms both OpenAI's GPT-5 and Google's Gemini on visual reasoning tasks…while using a fraction of the computing power.

The model has 28 billion parameters but only activates 3 billion during operation, making it incredibly efficient. It can understand documents, analyze charts, and reason about videos. In benchmarks, it scored higher than GPT-5 on tasks like analyzing mathematical diagrams and understanding complex charts.

The model includes a feature called "Thinking with Images" that lets it dynamically zoom in on images to read text better or focus on specific details, similar to how humans solve visual problems. It can run on a single 80GB GPU, making it accessible to more companies than competing models that need multiple high-end chips.

Baidu released this under the Apache 2.0 license, meaning anyone can use it commercially for free. The company also unveiled ERNIE 5.0, a proprietary 2.4 trillion-parameter model that handles text, images, audio, and video together, claiming it beats competitors on enterprise tasks.

Scientists Create System That Decodes Your Thoughts

Researchers from UC Berkeley and Japan's NTT Communication Science Laboratories built an AI system that can basically read your mind, or at least describe what you're seeing in real time, by analyzing your brain activity.

The AI first learns to connect video clips with written descriptions, creating what researchers call a "meaning signature." Then it learns to match these signatures to brain scans from people watching the videos. Once trained, it can look at your brain activity and generate surprisingly accurate descriptions of what you're seeing.

In tests, when someone watched a video of a person jumping off a waterfall, the AI first guessed "spring flow" but quickly refined it to "a person jumps over a deep water fall on a mountain ridge." It picked the right video out of 100 options about half the time, far above the 1% expected from random guessing.

The researchers call this "mind captioning": turning brain activity into text descriptions. The potential applications are huge: helping people with paralysis or speech loss communicate, advancing brain-computer interfaces, or enabling new forms of assistive technology.

It only works with massive MRI scanners and requires your cooperation, so your private thoughts are safe... for now. As one researcher put it, "Nobody has shown you can do that, yet." That word "yet" is doing a lot of work.

Google's "Ask" Button: Talk to Your Photos and YouTube Videos

Google just rolled out a feature that transforms how you interact with photos and videos. The new "Ask" tool, powered by Gemini AI, lets you have conversations with your content like you're chatting with a friend.

In Google Photos, you can type or speak questions like "Show me photos from my Paris trip" or "Make the sky brighter" and watch it happen instantly. No more scrolling through thousands of photos or wrestling with editing tools—just describe what you want in plain language.

For YouTube, the Ask button appears below videos and can summarize content, answer questions, or explain topics without pausing playback. Want to know "What's this video about?" or "List all the ingredients in this recipe"? Just ask.

Google is also adding templates to create trendy videos automatically, tools to animate photos into short clips, and the ability to remix images into different artistic styles (turn your selfie into anime or a comic book style).

The Ask feature is currently available for users 18+ in the U.S., with plans to expand to over 100 countries and 17 new languages soon. To address privacy concerns, Google emphasizes that these conversations aren't used for ads and that responses are generated in real time.

Google DeepMind's SIMA 2: An AI Gaming Buddy That Learns and Reasons

Remember SIMA, Google's AI that could follow basic instructions in video games? Well, SIMA 2 just leveled up dramatically. This new version doesn't just follow orders…it thinks, reasons, and learns from experience like a gaming companion.

Powered by Gemini, SIMA 2 can understand complex goals, explain its thinking, and even have conversations while playing. Ask it "Why are these fish swimming in circles?" and it'll give you an informed answer. Tell it to "Find a campfire and build a shelter nearby," and it'll break down the task and execute it step by step.

SIMA 2 can play games it's never seen before and even handle newly generated worlds from Genie 3 (Google's world-building AI). It demonstrated "unprecedented adaptability" by figuring out how to navigate and accomplish goals in completely novel environments.

SIMA 2 can improve itself. After failing at tasks, it uses Gemini to analyze what went wrong and tries again, getting better without human intervention. This self-improvement capability is a crucial step toward AI that can learn continuously.

LinkedIn Gets Smarter: AI-Powered Search Finds People by Description, Not Just Names

LinkedIn is finally fixing one of its most frustrating problems: finding the right people. The new AI-powered search lets you describe who you're looking for in plain language instead of guessing job titles and wrestling with filters.

Instead of searching for specific job titles, you can now type things like "Find me investors in the healthcare sector with FDA experience" or "Who in my network can help me understand wireless networks?" The AI understands your intent and finds relevant people even if they don't have those exact keywords in their profiles.

LinkedIn's search bar now says "I'm looking for..." instead of just "Search"—a small but meaningful change that signals the shift from keyword matching to intent-driven discovery. The system ranks results based on how relevant they are to your search and how connected you are to them, making it more likely you'll reach out to second-degree connections rather than strangers.

Currently rolling out to Premium users in the U.S. with plans for global expansion, this feature could transform professional networking from a guessing game into an intuitive conversation.

Custom GPT That Writes Resumes in Under 5 Minutes

Crafting a résumé shouldn’t feel like a full-time job. Yet most job seekers spend hours tweaking bullet points, reformatting sections, and rewriting summaries only to get rejected without explanation.

Even worse? Many turn to ChatGPT hoping it will “just write a résumé,” but the results are usually generic, robotic, and instantly ignored by recruiters.

You end up wasting even more time prompting, editing, and re-prompting and still don’t get interviews.

That’s why I built Impact CV GPT, a ChatGPT tool trained specifically on resumes to help you generate tailored, ATS-ready resumes: quantified, recruiter-friendly, and built for the exact job you want. All in less than 5 minutes.


Until next time - Shailesh and NextStepCareer